Nvidia has released the KAI Scheduler, an open-source GPU scheduling solution designed specifically for Kubernetes. This move is not just about releasing software; it changes how artificial intelligence workloads can be managed. Making the KAI Scheduler available under the Apache 2.0 license lets anyone inspect, modify, and redistribute the code, and it signals a push toward collaboration and transparency in the tech industry. As proprietary barriers fall away, open-source tools like KAI give smaller players a better chance to compete with the giants and encourage creativity and innovation in the AI space.

In our current era, having access to cutting-edge tools can be the key differentiator between success and failure. The traditional model of heavily guarded proprietary solutions often hinders collaboration and stifles innovation. Nvidia’s open-source initiative is a direct challenge to this status quo, inviting developers from all corners to contribute and innovate without the shackles of corporate secrecy. This is particularly exciting in the AI arena, where collective problem-solving is essential to tackle complex computational challenges.

A Game-Changer for Resource Management

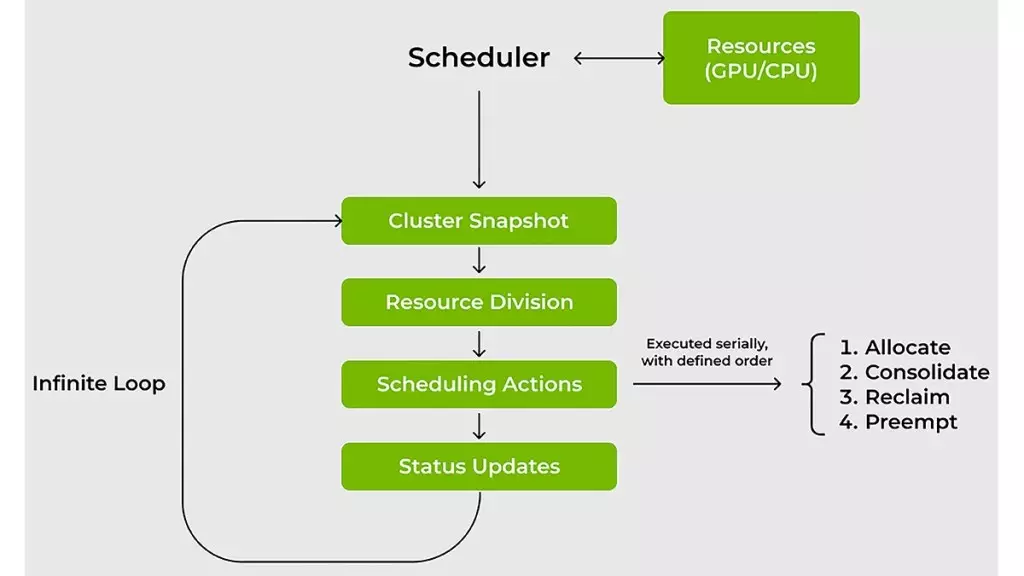

One of the standout features of the KAI Scheduler is its ability to streamline resource management, particularly for GPU and CPU allocations. For too long, IT teams have dealt with inefficiencies caused by traditional scheduling systems, which often leave teams uncertain about when their jobs will run and unproductive while they wait. KAI Scheduler is designed to address those problems.

The scheduler’s dynamic resource allocation model rethinks how fluctuating workloads are managed. Unlike conventional schedulers that rely on static allocations, KAI adjusts allocations in real time based on demand, reducing the bottlenecks that get in the way of efficient resource utilization. This agility not only shortens wait times, which are often a serious bottleneck, but also lets teams spend their effort on innovation rather than on micromanaging resources.

Intelligent Resource Optimization

At the core of KAI Scheduler’s effectiveness is its dual strategy: bin-packing and task spreading. Together they address the pervasive issue of resource fragmentation in shared clusters. Bin-packing consolidates smaller tasks onto GPUs and CPUs that are already partly in use, so that capacity is filled rather than left scattered across nodes in fragments too small for larger jobs. In a world where every bit of performance counts, this optimization matters a great deal.
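To make the difference between the two strategies concrete, here is a minimal sketch of how a scheduler might choose a node under each one. It is an illustration only, not KAI Scheduler's actual code: the `Node` class, `pick_node`, `place_all`, and the strategy names `"binpack"` and `"spread"` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Node:
    # Hypothetical node model: only GPU counts are tracked.
    name: str
    total_gpus: int
    used_gpus: int = 0

    @property
    def free_gpus(self) -> int:
        return self.total_gpus - self.used_gpus


def pick_node(nodes, gpus_needed, strategy):
    """Return the node a task should land on, or None if nothing fits."""
    candidates = [n for n in nodes if n.free_gpus >= gpus_needed]
    if not candidates:
        return None
    if strategy == "binpack":
        # Prefer the fullest node that still fits, keeping other nodes empty.
        return min(candidates, key=lambda n: n.free_gpus)
    if strategy == "spread":
        # Prefer the emptiest node, balancing load across the cluster.
        return max(candidates, key=lambda n: n.free_gpus)
    raise ValueError(f"unknown strategy: {strategy}")


def place_all(nodes, requests, strategy):
    """Place each GPU request in order; record the chosen node name or None."""
    placements = []
    for gpus in requests:
        node = pick_node(nodes, gpus, strategy)
        if node is not None:
            node.used_gpus += gpus
        placements.append(node.name if node else None)
    return placements


if __name__ == "__main__":
    requests = [1, 1, 1, 1, 4]  # four small tasks, then one 4-GPU job
    for strategy in ("binpack", "spread"):
        cluster = [Node("a", 4), Node("b", 4)]
        print(strategy, place_all(cluster, requests, strategy))
```

With two 4-GPU nodes, bin-packing puts the four small tasks on one node and leaves the other free for the 4-GPU job. Spreading balances the small tasks across both nodes, which eases load on each node but leaves no single node with four free GPUs. That trade-off is why having both strategies available is useful.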

Moreover, spreading workloads evenly across nodes serves a dual purpose. Not only does it relieve overloaded servers, but it also encourages equitable sharing within teams. This approach discourages resource hoarding, a pattern often seen in environments where certain researchers hold on to more GPUs than they need. Speaking as someone who has worked in collaborative settings, I can attest to the camaraderie that comes from ensuring fair access to resources, and KAI Scheduler helps make that collaborative spirit possible.
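One common way to reason about fair access is max-min fairness: each team gets what it asks for up to an equal share, and capacity left over by small requesters is redistributed among teams that still want more. The sketch below illustrates that general idea under assumed inputs; it is not taken from KAI Scheduler, and the function name and team names are hypothetical. The fractional values are just arithmetic, not a claim about how GPUs are actually divided.

```python
def max_min_fair_share(capacity, demands):
    """Split `capacity` GPUs across teams using max-min fairness.

    No team receives more than it requested; unused share from small
    requesters is handed to teams whose demand is not yet met.
    """
    allocation = {team: 0.0 for team in demands}
    unsatisfied = {team for team, d in demands.items() if d > 0}
    remaining = float(capacity)
    eps = 1e-9  # tolerance for floating-point leftovers

    while unsatisfied and remaining > eps:
        share = remaining / len(unsatisfied)
        for team in sorted(unsatisfied):
            grant = min(share, demands[team] - allocation[team])
            allocation[team] += grant
            remaining -= grant
        unsatisfied = {
            t for t in unsatisfied if demands[t] - allocation[t] > eps
        }
    return allocation


if __name__ == "__main__":
    # Hypothetical teams: one modest request, two large ones, 8 GPUs total.
    print(max_min_fair_share(8, {"vision": 2, "nlp": 10, "speech": 10}))
```

In this example the team asking for 2 GPUs gets all of them, and the two teams asking for 10 each end up with about 3 apiece, so no single team can crowd out the others simply by requesting more.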

Simplifying Complexity for Greater Innovation

Navigating the various frameworks used in AI development, such as Kubeflow or Ray, can feel like solving a Rubik’s cube: frustrating and often counterproductive. Traditional methods typically demand extensive manual configuration that derails the prototyping process. Nvidia’s KAI Scheduler includes a built-in podgrouper that integrates these tools into existing workflows, cutting down on complexity so that innovation can happen at a far more rapid pace.
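As a rough picture of what grouping pods buys you, the sketch below assumes the podgrouper's job is to gather the pods of one workload into a group that is scheduled together, all or nothing. That assumption, along with the `owner` and `gpus` fields and both function names, is hypothetical and for illustration only; it does not reflect KAI Scheduler's real data model or API.

```python
from collections import defaultdict


def group_pods(pods):
    """Group pods by the workload that owns them (hypothetical `owner` field)."""
    groups = defaultdict(list)
    for pod in pods:
        groups[pod["owner"]].append(pod)
    return dict(groups)


def schedule_gangs(pods, free_gpus):
    """Admit a group only if all of its pods fit at once (all-or-nothing)."""
    admitted = []
    for owner, members in group_pods(pods).items():
        needed = sum(p["gpus"] for p in members)
        if needed <= free_gpus:
            free_gpus -= needed
            admitted.append(owner)
        # Otherwise the whole group waits; no partial placement happens.
    return admitted, free_gpus


if __name__ == "__main__":
    pods = [
        {"name": "ray-head", "owner": "ray-job", "gpus": 1},
        {"name": "ray-worker-0", "owner": "ray-job", "gpus": 2},
        {"name": "ray-worker-1", "owner": "ray-job", "gpus": 2},
        {"name": "tf-worker-0", "owner": "tf-job", "gpus": 4},
        {"name": "tf-worker-1", "owner": "tf-job", "gpus": 4},
    ]
    print(schedule_gangs(pods, free_gpus=8))
```

The point of grouping is that a distributed job is never left half-started: either every pod in the group gets resources, or the group waits and the GPUs stay available for work that can actually run.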

By dissolving these traditional barriers, Nvidia has taken a definitive stand in cultivating an active and responsive developer community. The release reaffirms its commitment to an ecosystem where input is welcomed and valued, as opposed to an insulated environment dominated by a single corporation’s vision.

Fostering a Culture of Collaboration

The launch of the KAI Scheduler is a significant step not just for AI GPU scheduling but also for building a culture of collaboration and shared knowledge. Continuous feedback from developers can improve the tool’s functionality, benefiting the industry as a whole. Open-source projects like KAI Scheduler are not just about providing tools; they encourage a culture that values shared growth and development over competitive exclusivity.

Nvidia’s forward-thinking strategy of embracing and promoting open-source models has set a formidable precedent that will likely reshape how tech giants approach AI infrastructure in the future. By breaking down the barriers that have long confined proprietary technology, Nvidia is championing an ecosystem ripe for innovation. Where once walls divided, bridges now connect diverse ideas and talents, creating fertile ground for groundbreaking advancements in AI technology.

Nvidia has pivoted not only to redefine resource scheduling but also to reshape the very fabric of AI development, inviting collaboration, creativity, and expertise from all corners of the tech world.
