Sam Altman, CEO of OpenAI, has announced plans to release a state-of-the-art open-weight artificial intelligence model in the coming months, a notable shift for a company long associated with closed, API-only models. The decision comes amid fierce competition from players like DeepSeek and Meta, which have used open-weight releases to capture attention and build user loyalty. The implication is that OpenAI sees a need to adapt or risk irrelevance as demand for openness and flexibility in AI grows. Altman’s candid remark that OpenAI had been “on the wrong side of history” speaks volumes about the company’s past hesitance to embrace open models.

The Rise of Open-Weight Models: A Response to Competitive Pressure

OpenAI’s pivot toward open weights is not merely a reaction to competitors; it is a step the company needs to take to remain at the forefront of the field. The excitement surrounding DeepSeek’s R1 model and Meta’s Llama models underscores the urgency. Developers are not just looking for powerful AI capabilities; they want models they can customize, modify, and deploy on their own terms. OpenAI, historically characterized by its cloud-centric solutions and fixed APIs, is now set to give developers far more control over how its models are adapted and run.

This shift not only promises to enhance user experiences but also aims to democratize innovation, providing a wider range of creators with the tools they need to contribute meaningfully to the AI landscape. The question looming over this transition, however, is whether OpenAI can successfully navigate the challenges that accompany widespread accessibility.

Challenges and Ethical Considerations in Open-Weight AI

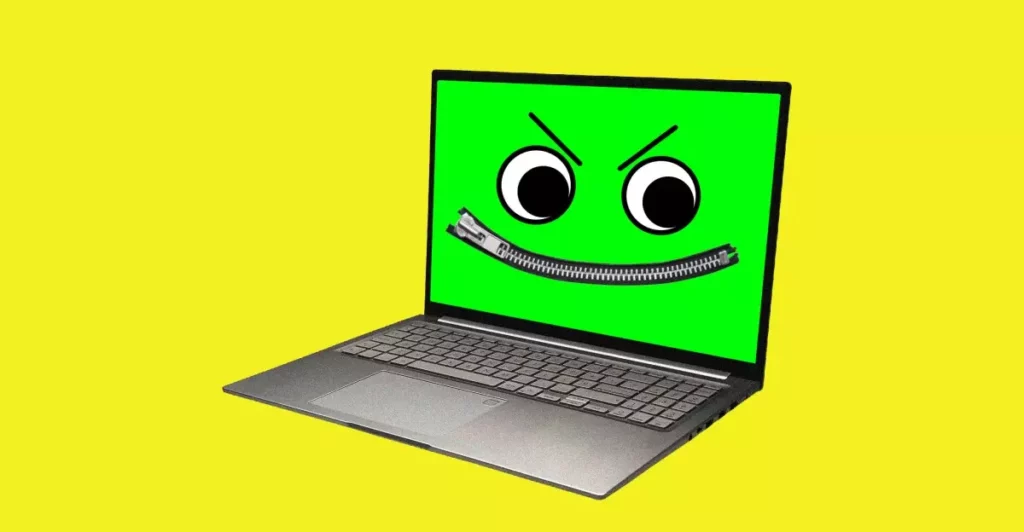

While the excitement around open-weight AI is palpable, it comes with significant concerns. The prospect of users running these models on their own hardware raises red flags about potential misuse. Cybersecurity experts and ethicists alike are already sounding alarms about putting powerful AI tools into the hands of potentially malicious actors. OpenAI’s Steven Heidel acknowledges the need for careful testing to mitigate these risks, but skepticism remains about how effectively safeguards can be enforced once a model’s weights are publicly available.

Johannes Heidecke, also of OpenAI, has highlighted the delicate balance between fostering innovation and ensuring safety. The AI research community is replete with cautionary tales about how unrestricted access to advanced technologies can lead to harmful outcomes. With open-weight models putting powerful capabilities directly in users’ hands, the specter of cyber offenses or even the development of harmful biological applications looms large. That risk places a moral obligation on OpenAI to tread carefully as it expands the accessibility of its technology.

Community Collaboration: A Double-Edged Sword

As part of this new initiative, OpenAI has encouraged developers to apply for early access, a strategic move that fosters community engagement and gives the company feedback it can use to refine the model before a wider release. OpenAI’s commitment to co-creation reflects an emerging approach to AI development that seeks input from a range of stakeholders. However, while collaborative innovation is invaluable, it carries an inherent risk: incorporating feedback from many contributors could introduce inconsistencies or vulnerabilities into the product.

Moreover, OpenAI must remain vigilant in how it addresses concerns about transparency in dataset sourcing and the licensing requirements for third-party applications. Meta has frequently faced scrutiny in this area, highlighting the need for OpenAI to build and maintain trust as it moves into the open-weight space. A commitment to transparency and collaboration will be crucial, not only for user trust but also for navigating the complex commercial landscape.

The Road Ahead: Embracing Open-Weight AI with Caution

The path that OpenAI is embarking upon is fraught with challenges yet loaded with opportunities. A strategic commitment to open-weight AI has the potential to redefine the contours of the industry. By integrating user-fueled innovation with robust ethical safeguards, OpenAI could become a beacon of progress amidst the complexities of technology today. It is, however, critical that the organization maintains a vigilant stance against the potential pitfalls of this approach.

As the industry watches intently, OpenAI’s resolve to embrace this new model could herald an era of unprecedented innovation in artificial intelligence. The real question remains: can the company harness this openness responsibly while protecting society from the potentially dire consequences of powerful technology?
