The realm of artificial intelligence is a hotspot of innovation, teeming with promises from tech executives about a future dominated by Artificial General Intelligence (AGI). Unfortunately, much of this excitement is shrouded in hype. True AGI, capable of human-like reasoning and understanding, remains a distant objective. Today’s AI systems, albeit impressive in their capabilities, still operate within significant limitations that necessitate ongoing refinement. The narrative surrounding AGI has led many to believe we are on the brink of a revolutionary breakthrough, yet the reality is that we are still navigating a complex landscape filled with technical obstacles and ethical considerations.

Instead of rushing toward an uncertain future, we must critically engage with the current state of AI and recognize that its maturation requires patience, diligence, and responsible oversight. Amid this backdrop, companies like Scale AI emerge as crucial players in fostering responsible AI development while championing enhanced training methodologies.

Scale AI’s Role in Revolutionizing Training Methodologies

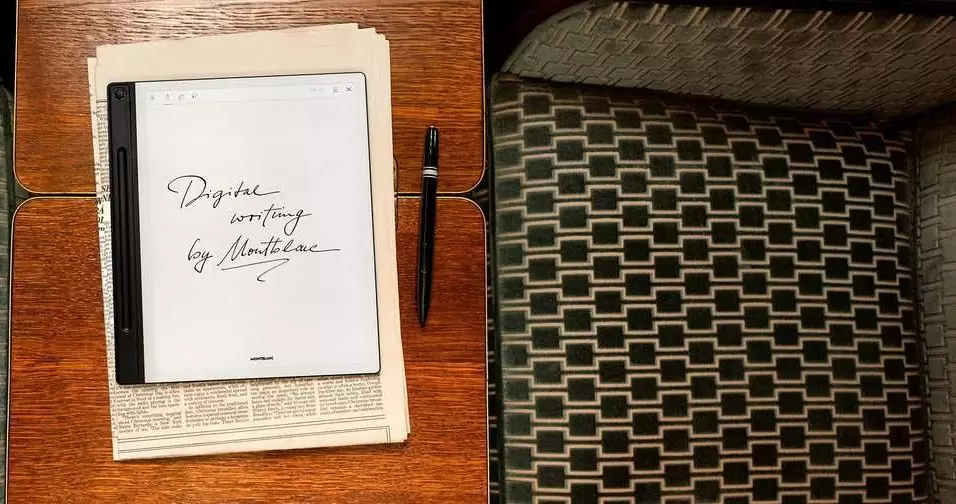

Scale AI stands as a transformative force in the AI training ecosystem. Its platform does more than aggregate data; it changes how AI models are assessed and refined. The introduction of Scale Evaluation is particularly noteworthy: it marks a shift toward an automated evaluation process built on machine-learning algorithms. This system reduces the reliance on human evaluators and offers a more consistent and objective means of gauging a model’s performance.

The appeal of Scale Evaluation lies in its ability to highlight weaknesses that require further training. This approach encourages developers to dissect results, pinpoint areas needing improvement, and implement targeted data campaigns. However, even as this automated system improves efficiency, it raises the question of whether some of the nuance that human analysis offers is being lost. Are we sacrificing the insights that come from human evaluators in favor of algorithmic efficiency? This tension between efficiency and depth remains a critical consideration in AI advancement.

Unveiling Performance Gaps: A New Frontier in AI Reasoning

Reasoning sits front and center among the capabilities that Scale Evaluation targets. Effective AI reasoning is not merely algorithmic execution; it involves a deep comprehension of complex issues. It means breaking multifaceted problems down into digestible components and arriving at solutions that hold up in real-world applications.

A striking revelation from Scale Evaluation was the discovery of significant drops in reasoning abilities for models dealing with non-English prompts. While these models could handle English inputs with relative ease, they stumbled dramatically with prompts in other languages. This inconsistency underscores a pressing issue: the need for diverse training datasets. AI cannot advance if its training remains skewed toward a predominantly English-speaking framework. Are we complicit in stifling the potential of AI to serve global audiences because of our limited focus?
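To make the idea of a per-language performance gap concrete, here is a minimal sketch of how such a gap could be measured. It is not Scale Evaluation's actual method: the function names, the `(language, is_correct)` record format, and the sample records are all hypothetical and invented purely for illustration.

```python
from collections import defaultdict


def accuracy_by_language(results):
    """Return accuracy per language from (language, is_correct) pairs."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for language, is_correct in results:
        totals[language] += 1
        correct[language] += int(is_correct)
    return {lang: correct[lang] / totals[lang] for lang in totals}


def gaps_vs_baseline(accuracy, baseline="en"):
    """Return how far each language's accuracy falls below the baseline's."""
    base = accuracy[baseline]
    return {lang: base - acc for lang, acc in accuracy.items() if lang != baseline}


# Hypothetical, made-up grading records (not real evaluation data).
sample = [
    ("en", True), ("en", True), ("en", True), ("en", False),
    ("es", True), ("es", False), ("es", False), ("es", True),
    ("hi", False), ("hi", False), ("hi", True), ("hi", False),
]

acc = accuracy_by_language(sample)
gaps = gaps_vs_baseline(acc)

# List languages from largest gap to smallest.
for lang, gap in sorted(gaps.items(), key=lambda item: item[1], reverse=True):
    print(f"{lang}: accuracy {acc[lang]:.2f}, gap vs. en {gap:.2f}")
```

A breakdown like this only flags where the shortfall is largest. Deciding what additional training data to collect for those languages remains a separate, human judgment.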

Setting New Benchmarks for AI Accountability

The evolution of Scale AI reflects a commitment not only to refining individual models but also to establishing new benchmarks that push AI capabilities forward. Tools like EnigmaEval, MultiChallenge, and MASK are designed with rigorous evaluation in mind, examining a range of potential outcomes in support of both progress and ethical considerations in AI development.

The absence of standardized evaluation methods in AI testing creates an environment ripe for vulnerabilities, and that concern cannot be overstated. As AI systems become increasingly integrated into our lives, understanding their limitations becomes vital. A chaotic landscape of testing methodologies could leave model inconsistencies unaddressed. The collaboration between Scale AI and the U.S. National Institute of Standards and Technology marks a significant stride toward creating a robust framework for AI accountability. This partnership emphasizes safety and trustworthiness, key attributes that any AI system must embody.

Fostering a Culture of Vigilance and Transparency

Errors and blind spots in generative AI must not be overlooked. It’s imperative for stakeholders across industries to stay vigilant in identifying shortcomings. Fostering a culture of transparency and continuous improvement makes responsible AI governance achievable. Ethical standards must be woven into AI development alongside innovation, reinforcing that technology should serve humanity’s best interests.

As we move forward into this new era of AI accountability, it is vital to ensure that our pursuit of innovation is anchored in principles that promote not just progress but also ethical integrity. Scale AI’s initiatives foreshadow a transformative journey in AI research and development, one that could reshape our relationship with artificial intelligence for generations to come.
