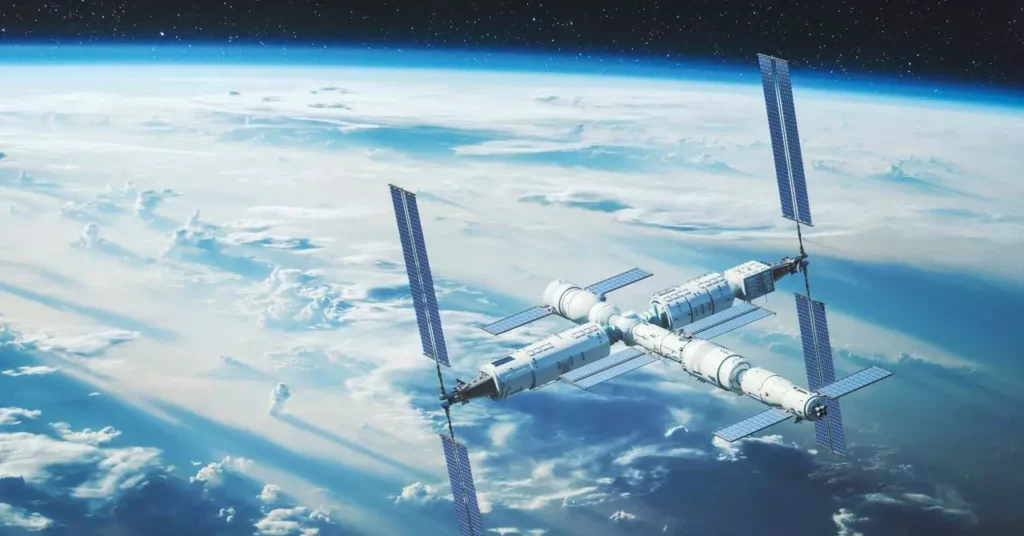

Wukong AI, China’s latest venture into artificial intelligence aboard its Tiangong space station, signals a bold step, yet it is riddled with overconfidence and problematic assumptions. The Chinese government touts Wukong as an innovative fusion of machine learning and aerospace expertise, but beneath this shiny veneer lies an ambitious myth-making exercise rooted in nationalist pride. There is a subtle danger in celebrating technological milestones while ignoring fundamental flaws in trustworthiness and autonomy, along with their strategic implications. The narrative manages to sound impressive, “game-changing” even, yet it conceals a fragile foundation of untested assumptions about AI’s capacity to operate reliably in the unforgiving environment of space.

In reality, Wukong AI’s debut demonstrates how political aspirations often drive technological development, producing superficial displays of progress rather than genuine mastery. The Chinese space agency emphasizes Wukong’s functions, such as support during spacewalks, fault diagnosis, and communication, but these claims are colored by a techno-optimism that glosses over the harder questions. They sit on the surface of a more tangled truth: deploying AI in such a critical setting exposes vulnerabilities, especially when the system is built on open-source models that may not have been adequately hardened or security-optimized for high-stakes scenarios.

False Confidence in AI’s Capabilities

The promotional narrative surrounding Wukong AI feeds a dangerous misconception: that artificial intelligence, even in high-stakes environments like space, can seamlessly augment human effort and serve as an infallible guardian of crew safety. In truth, as many critics have pointed out, current AI systems remain fundamentally imperfect. They are susceptible to errors and misinterpretations and, in worst-case scenarios, can be compromised by cyberattacks. China’s decision to rely on an open-source model compounds these risks: open-source code, while transparent, can be studied by attackers as easily as by defenders, which leaves it more exposed to malicious exploitation unless it is meticulously secured and validated.

We should ask whether Wukong AI’s deployment is driven more by political symbolism than by a comprehensive risk assessment. The statement that it was designed “to meet the requirements of manned space missions” sounds promising, but history shows that AI can mislead even the most technically sophisticated operators when deployed outside well-controlled environments. Space, with its harsh radiation, extreme temperatures, and communication delays, presents challenges that few current AI systems can withstand with full reliability. In this context, overreliance on such tools could become a ticking time bomb for crew safety, a risk that China, willingly or not, is embracing in pursuit of national prestige.

The Myth of Autonomous Space Operations

Admittedly, Wukong AI’s integration signals a shift toward more autonomous operations, but the current narrative exaggerates its independence. Its combination of ground-based analysis and onboard support appears impressive, yet it remains a fundamentally supervised system, one that depends heavily on ground control for validation and oversight. The narrative of “self-sufficiency” is more propaganda vehicle than reality check. Wukong’s actual operational reliability, especially during emergencies, remains largely untested.

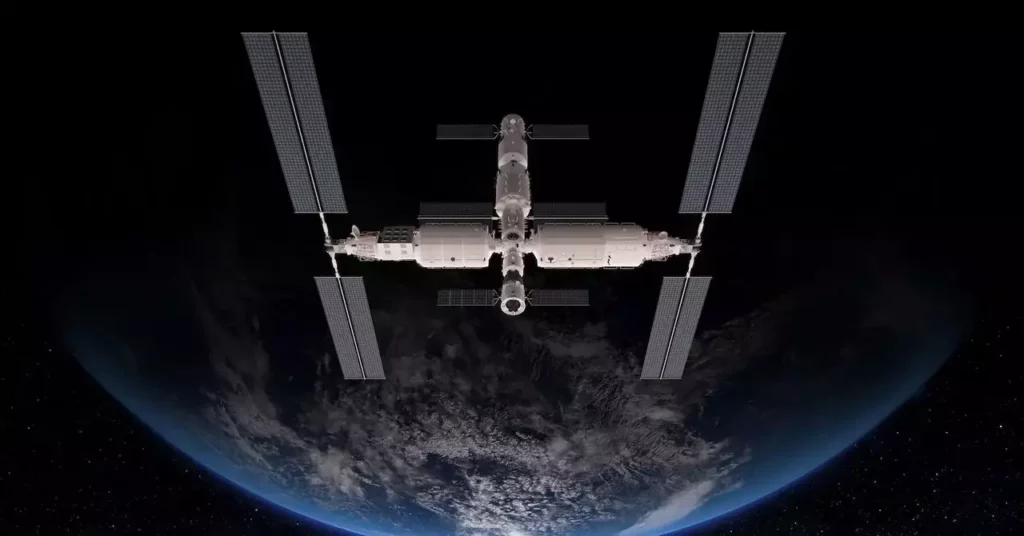

Furthermore, equating Wukong with other space AI systems, such as CIMON, the crew assistant built by Airbus and IBM for the German Aerospace Center (DLR), or NASA’s free-flying Astrobee robots, fails to recognize the specific risks tied to China’s strategic ambitions. While Western agencies have approached AI deployment with cautious, incremental steps, China has often preferred overt demonstrations and symbolic milestones, regardless of whether those steps hold up against the chaos of space. This ever-present temptation to showcase technological prowess risks distracting from the more pressing need for proven, resilient safety systems.

The Political Edge—A Machine for National Power

In the grand scheme, the Wukong AI project is less about technological innovation and more about geopolitics. As China endeavors to reaffirm its position as a true space power, each demonstration—be it a spacewalk supported by AI or a lunar landing—serves as a headline in a narrative of national ascendancy. This obsession with signaling strength can obscure the practical limitations of current systems and distract policymakers from addressing fundamental shortcomings.

The myth of Sun Wukong, the Monkey King, symbolizes cunning and resilience, but the real question is whether China’s AI ambitions are rooted in genuine technical mastery or mere symbolic bravado. Historically, nations that have overpromised in space technology have often stumbled when faced with unpredictable realities. While the Chinese government touts Wukong as a “game-changer,” the reality is more nuanced: its focus might be better directed toward building sustainable, secure, and reliable systems than toward flashy symbols of progress meant to burnish national pride.

In sum, China’s deployment of Wukong AI exemplifies a broader tendency—hype over substance, bravado over robustness. The space environment demands humility, not hubris, and it is essential to remain critical of claims that promise the future without acknowledging the perilous ground upon which such claims are built.
